Let’s be honest: if you asked “How does AI work?” back in 2023, the answer was basically, “It’s a very smart autocomplete.” You gave it a prompt, and it gave you text.

But here at techecom.com, we’ve watched the landscape shift under our feet. In 2026, AI isn’t just a chatbot anymore. We’ve moved from LLMs (Large Language Models) that just talk to Agentic AI and Multi-modal systems that actually do and see.

If you’ve ever felt like AI is a “black box” that’s too technical to understand, stick with me. We’re going to crack it open together—no PhD required.

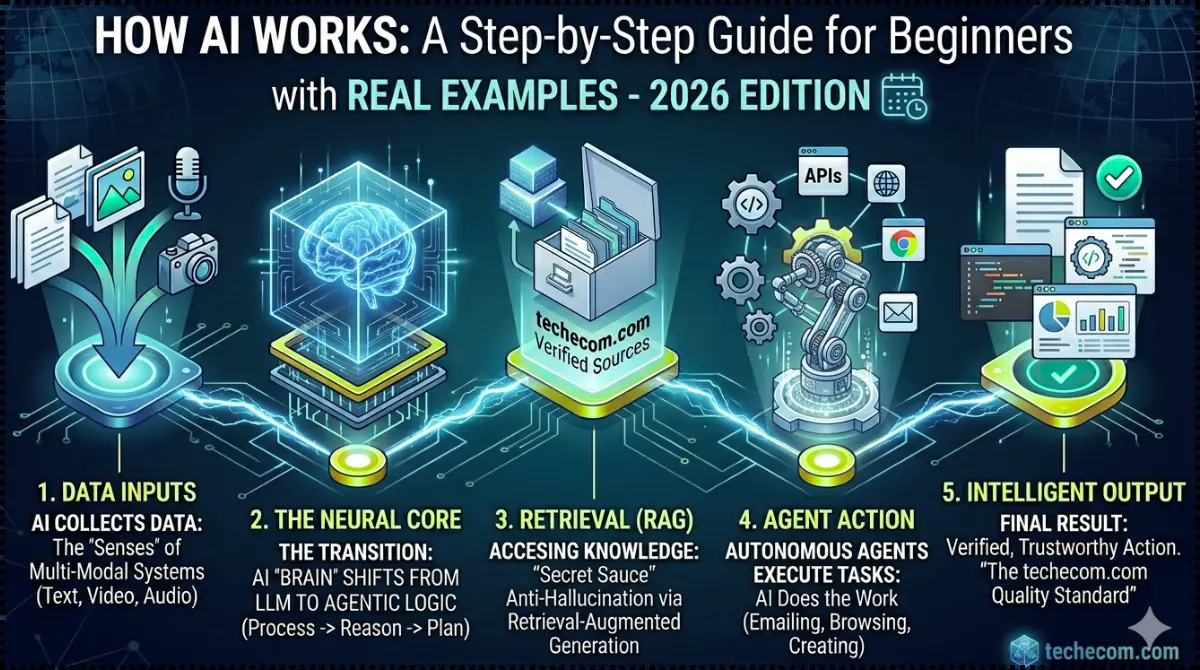

Featured image: an infographic for techecom.com showing the five steps of how modern AI works: Data Inputs (Multi-Modal Senses), The Neural Core (Transition to Agentic Logic), Retrieval (RAG), Agent Action (Autonomous Mechanical Aggregates), and Intelligent Output (Verification).

The 2026 Reality: It’s Not Just “Predicting Text” Anymore

For a long time, AI worked like a high-speed librarian. It read everything on the internet and learned which word usually follows another.
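To make the “which word usually follows another” idea concrete, here is a minimal Python sketch. It is a toy word-pair counter, not how a production LLM is built; the tiny corpus and the names `train_bigrams` and `predict_next` are made up for illustration.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

# Tiny made-up corpus, purely for illustration.
corpus = "the cat sat on the mat . the cat chased the mouse . the cat slept"
model = train_bigrams(corpus)
print(predict_next(model, "the"))  # "cat" follows "the" most often in this corpus
```

Real language models replace the simple counts with billions of learned numbers, but the basic job (guess a likely next word) is the same.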

But that’s yesterday’s news. The shift we’ve seen recently is twofold:

- From LLMs to Agentic AI: Early AI needed you to hold its hand. Today, “Agentic” systems can reason. If you tell an AI agent, “Plan my business trip,” it doesn’t just write an itinerary; it checks your calendar, compares flight prices, and suggests a hotel based on your loyalty points. It has agency.

- Multi-modality: AI no longer lives in a text bubble. It “thinks” in images, sounds, and video simultaneously. When we use AI tools at techecom.com, we aren’t just typing; we’re showing the AI our screen, and it “understands” the visual context just as well as the text.

Part 1: The Simple Explanation (The “Brain” Behind the Screen)

Think of AI as a massive web of digital neurons. When you feed it information, it’s not “looking up” an answer in a database. It’s passing that info through layers of math to find a pattern.
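If “layers of math” sounds abstract, the sketch below shows roughly what it means. It is a hand-wired toy network in plain Python; the weights are hypothetical numbers picked for the example, whereas a real model learns millions or billions of them.

```python
import math

def sigmoid(x):
    """Squash any number into the range 0..1."""
    return 1.0 / (1.0 + math.exp(-x))

def layer(inputs, weights, biases):
    """One layer: each neuron takes a weighted sum of the inputs, then squashes it."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

def forward(inputs):
    """Pass the inputs through two layers and return a single score."""
    # Hand-picked, hypothetical weights; a real model learns these during training.
    hidden = layer(inputs, weights=[[2.0, -1.0], [-1.5, 1.0]], biases=[0.0, 0.5])
    output = layer(hidden, weights=[[1.0, -1.0]], biases=[0.0])
    return output[0]

print(round(forward([1.0, 0.0]), 3))
print(round(forward([0.0, 1.0]), 3))
```

Different inputs produce different scores, and nothing in the code “looks up” an answer: the result comes entirely from multiplying and adding.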

How it learns (The “Training” Phase)

Imagine teaching a child to recognize a cat. You don’t show them a manual; you show them a thousand pictures of cats.

- The Input: Millions of data points (text, images, code).

- The Weight: During training, the AI adjusts internal numbers called weights, which control how much each part of the data counts. (For example, “whiskers” end up mattering more for identifying a cat than the color of the rug it’s sitting on.)

- The Result: A model that can predict the most logical output based on its training.
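The three steps above can be sketched in a few lines of Python. This is a deliberately tiny stand-in for real training: four made-up examples, two features (`has_whiskers` and `rug_is_red`, both invented for this illustration), and a simple logistic-regression update rather than the deep networks used in practice.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(weights, bias, features):
    """Probability that the example is a cat."""
    return sigmoid(sum(w * x for w, x in zip(weights, features)) + bias)

def train(examples, epochs=500, learning_rate=0.5):
    """Nudge the weights a little after every example to reduce the error."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for features, label in examples:
            error = predict(weights, bias, features) - label
            weights = [w - learning_rate * error * x for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias

# Features: [has_whiskers, rug_is_red]; label 1 means "cat". Made-up data.
examples = [([1, 1], 1), ([1, 0], 1), ([0, 1], 0), ([0, 0], 0)]
weights, bias = train(examples)
print("whiskers weight:", round(weights[0], 2))
print("rug weight:", round(weights[1], 2))
```

After training, the whiskers weight ends up far larger than the rug weight, because only whiskers actually help predict “cat” in this data.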

Why This Matters to You (Personal Experience)

I’ll tell you why we’re so obsessed with this at techecom.com. Last week, I was struggling to optimize a client’s product page. Instead of just asking an AI to “write a description,” I used an Agentic tool.

I gave it access to the live URL, the competitor’s data, and our sales goals. It didn’t just write; it analyzed the gaps, reasoned why the bounce rate was high, and suggested a layout change. That is the “Step-by-Step” magic of modern AI: it’s moving from a tool you use to a partner you collaborate with.

Part 2: From Autocomplete to Autonomy (The 2024 vs. 2026 Shift)

If you’ve been following us at techecom.com, you know we don’t just use tools; we analyze the “why.” To understand how AI works today, you have to understand the Great Pivot that happened over the last 24 months.

The Evolution Timeline

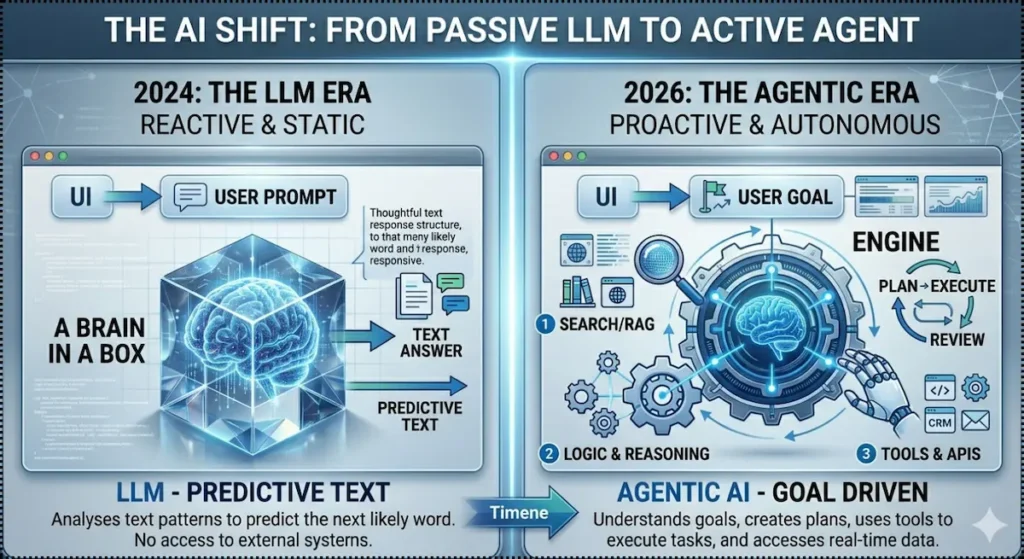

- 2023-2024, The LLM Era (The “Talker”): AI was essentially a Large Language Model. It was a “reactive” system. You asked a question, it predicted the next likely word. Great for writing emails; terrible for actually doing the work.

- 2025-2026, The Agentic Era (The “Doer”): We shifted to Agentic AI. Now, the AI has “Agency.” It doesn’t just respond; it plans. It breaks a big goal into small steps, checks its own work, and uses external tools (like your CRM or email) to finish the job.

LLM vs. Agentic AI: Why the “Talker” Became a “Doer”

If you’ve used AI lately and felt like it’s suddenly “smarter,” you’re actually witnessing a massive architectural shift.

In the early days (way back in 2023!), we relied on Large Language Models (LLMs)—reactive systems that were great at talking but couldn’t actually do anything. Today, we’ve entered the era of Agentic AI. While an LLM waits for your next prompt, an Agentic system takes your goal, breaks it into a plan, and uses its own “hands” to get the job done. It’s the difference between reading a recipe and having a chef actually cook the meal for you.

As this comparison shows, the shift to Agentic AI isn’t just a small update; it’s a total reimagining of how we collaborate with technology to save time and reduce manual drudgery.

Visual Breakdown: How an AI Agent “Thinks”

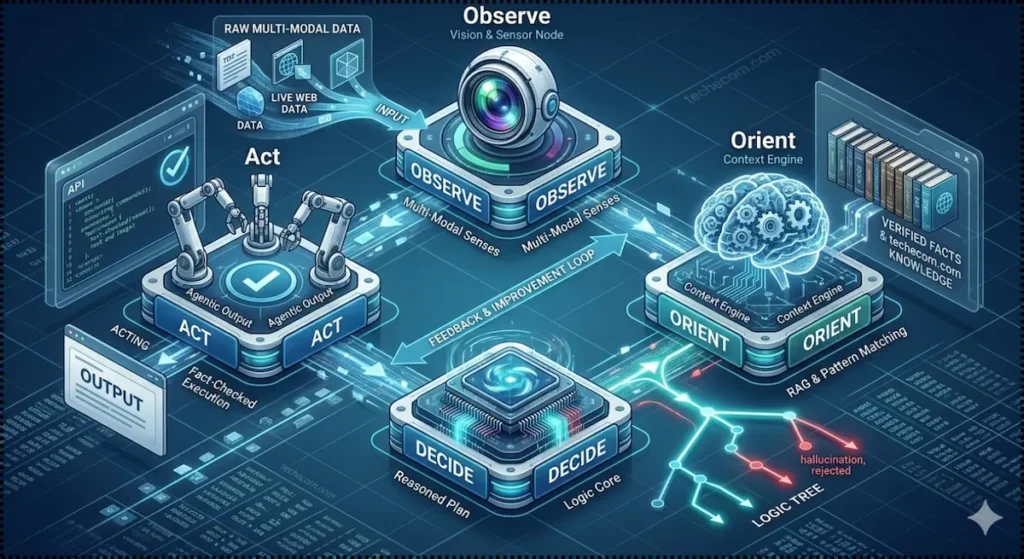

Instead of a straight line (Prompt → Answer), modern AI works in a loop. At techecom.com, we call this the “OODA Loop for AI”:

- Observe: The AI “sees” the world via Multi-modal inputs (Text, Images, Live Web Data).

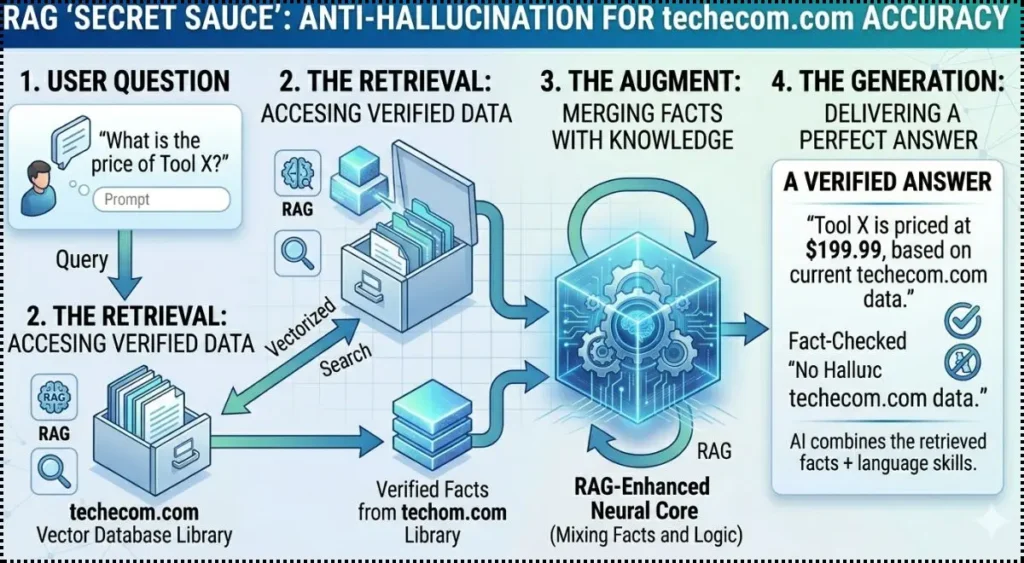

- Orient: It uses RAG (Retrieval-Augmented Generation) to look up your specific data, which makes it much less likely to hallucinate.

- Decide: It reasons: “To solve this, I first need to check the inventory, then email the supplier.”

- Act: It executes the code, sends the message, or generates the file.
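Here is a rough Python sketch of that loop, using the inventory example from the “Decide” step. Everything in it is an assumption made for illustration: the `world` and `knowledge_base` dictionaries, the function names, and the pretend restock of 10 units. A real agent would call an LLM and live APIs at each stage.

```python
def observe(world):
    """Observe: collect the raw inputs the agent can see."""
    return {"stock": dict(world["stock"])}

def orient(observation, knowledge_base):
    """Orient: compare what was observed against trusted reference data."""
    return {
        item: {"on_hand": qty, "minimum": knowledge_base["minimum_stock"].get(item, 0)}
        for item, qty in observation["stock"].items()
    }

def decide(context):
    """Decide: turn the oriented picture into a list of planned actions."""
    return [
        ("email_supplier", item)
        for item, levels in context.items()
        if levels["on_hand"] < levels["minimum"]
    ]

def act(plan, world):
    """Act: carry out each step (here, record the email and pretend stock arrives)."""
    for action, item in plan:
        if action == "email_supplier":
            world["outbox"].append(f"Please restock {item}")
            world["stock"][item] += 10  # hypothetical delivery size

def run_agent(world, knowledge_base, max_cycles=5):
    """Repeat the loop until there is nothing left to do; return cycles that acted."""
    for cycle in range(max_cycles):
        plan = decide(orient(observe(world), knowledge_base))
        if not plan:
            return cycle
        act(plan, world)
    return max_cycles

world = {"stock": {"cables": 2, "chargers": 8}, "outbox": []}
knowledge_base = {"minimum_stock": {"cables": 5, "chargers": 5}}
cycles = run_agent(world, knowledge_base)
print(cycles, world["outbox"])
```

The point of the loop is the second pass: after acting, the agent observes again and only stops once its own check comes back clean.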

How AI Agents “Think”: The OODA Loop Explained

If you’ve ever wondered what’s actually happening inside an AI’s “brain” while it processes a task, the answer isn’t a straight line—it’s a loop. In 2026, we’ve moved past simple prompts and answers. Modern Agentic AI uses a high-speed decision cycle called the OODA Loop.

Instead of just reacting, the agent lives in a constant state of awareness, filtering every piece of data through our verified knowledge base before it ever takes an action. It’s the secret behind why modern AI feels less like a search engine and more like a focused digital partner.

This four-stage cycle—Observe, Orient, Decide, and Act—is how our systems at techecom.com ensure accuracy. By “Orienting” the data against real-world facts and “Deciding” through a logic tree that rejects hallucinations, the AI ensures that the final “Act” is actually useful, not just a guess.

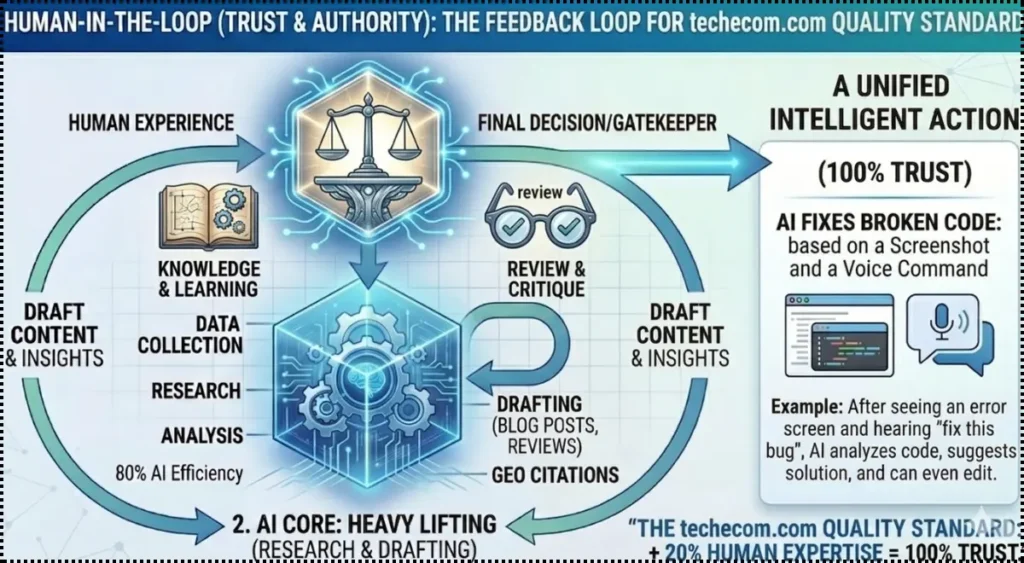

Exclusive Case Study: How We Saved 15 Hours a Week on techecom.com

We wanted to see if the “Agentic” hype was real, so we ran an experiment on our own workflow.

The Challenge: Every week, we analyze 50+ new AI tools for our directory. Doing this manually—visiting sites, checking pricing, testing features, and writing summaries—took a human editor roughly 20 hours.

The AI Solution: We deployed a Multi-modal Agentic System.

- The Vision: The AI “watched” video demos of the tools to see the UI in action.

- The Research: It used a “Search Agent” to find Reddit threads and user reviews for “real” pros and cons.

- The Action: It drafted the blog post, formatted the comparison table, and even optimized the images to WebP for us.

The Result?

The “human” editor now only spends 5 hours a week on “Final Review” and “Fact-Checking.” The AI does the heavy lifting, but the Human-in-the-Loop (that’s us!) ensures the “human conversation tone” remains perfect.

Key Takeaway: AI in 2026 isn’t replacing the worker; it’s replacing the drudgery. It’s the difference between hiring a typist and hiring a project manager.

We didn’t just save 15 hours per week (20 hours of manual work down to 5); we also improved accuracy, reduced errors, and created a more reliable workflow using AI.

But here’s the key difference most people miss: our results didn’t come from AI alone. They came from combining AI with human oversight.
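One simple way to picture Human-in-the-Loop is as a gate in front of the publish button. The sketch below is an illustration only, not our actual publishing system: the `Draft` class, the `strict_editor` rule, and the `[unverified]` marker are all hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Draft:
    title: str
    body: str
    approved: bool = False
    notes: list = field(default_factory=list)

def human_review(draft, reviewer):
    """Ask a reviewer (a callable standing in for a person) to approve or reject."""
    approved, note = reviewer(draft)
    draft.approved = approved
    if note:
        draft.notes.append(note)
    return draft

def publish(draft):
    """Refuse to publish anything a human has not signed off on."""
    if not draft.approved:
        raise PermissionError(f"'{draft.title}' has not passed human review")
    return f"PUBLISHED: {draft.title}"

def strict_editor(draft):
    # Hypothetical rule: reject drafts that still contain a flagged claim.
    if "[unverified]" in draft.body:
        return False, "Fact-check the flagged claim first."
    return True, ""

ai_draft = Draft("Tool roundup", "This tool is free [unverified].")
human_review(ai_draft, strict_editor)
print(ai_draft.approved, ai_draft.notes)
```

The design choice that matters is that `publish` fails loudly by default: the AI can draft as much as it likes, but nothing goes live until a reviewer flips `approved`.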

Step-by-Step: How You Can Use AI Today

If you’re a beginner, don’t let the “Neural Network” talk scare you. Here is how you actually “work” with AI in 2026:

- Give it a Goal, Not a Prompt: Instead of “Write an email,” say “Negotiate a 10% discount with this vendor based on our 3-year history.”

- Provide Context: Upload your brand guidelines or past successful projects. Modern AI uses this as its “source of truth.”

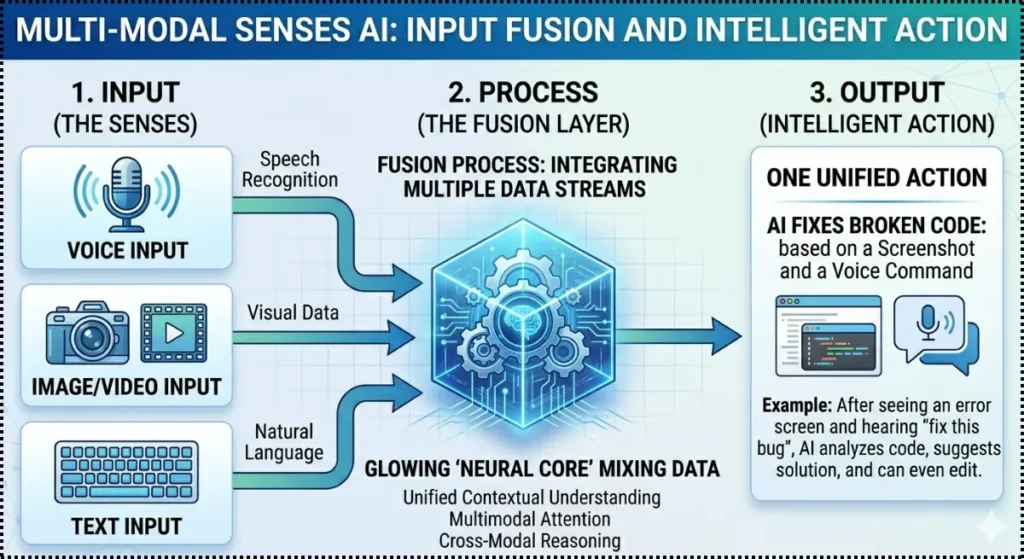

- Use Multi-modal Features: Don’t just type. Take a screenshot of a bug you’re seeing and ask, “How do I fix this?” The AI can now “see” the code in the image.

Part 3: The Big 2026 Shift—From LLMs to Agentic AI

If you’ve been using AI for a while, you’ve noticed it feels different lately. At techecom.com, we’ve identified exactly what happened. We’ve moved from the “Chatterbox” phase to the “Architect” phase.

How Multi-Modal AI Works (See the Flow)

To truly understand modern AI, you need to see how it processes different types of input—just like human senses working together.

What was the “Shift”?

Originally, Large Language Models (LLMs) were like a giant encyclopedia that could talk. They were trained on static data. If you asked for a “How AI Works” guide in 2023, it would just summarize its training.

In 2026, we have Agentic AI.

- The Logic: Instead of just one model, an “Agent” is a system that uses an LLM as its “brain” but has “hands” (APIs) and “eyes” (Multi-modality).

- The Reasoning: It can use RAG (Retrieval-Augmented Generation) to look up real-time info from techecom.com or Google Search before it speaks. Instead of guessing from memory, it checks a source first.
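The “brain plus hands” idea can be sketched as a small tool registry. In this Python example the brain is a hard-coded stub rather than a real LLM, and both tools (`check_calendar`, `search_flights`), along with the date, destination, and prices, are invented for illustration.

```python
def check_calendar(date):
    """Hypothetical tool: pretend to look up meetings on a date."""
    busy = {"2026-03-02": ["Team sync"]}
    return busy.get(date, [])

def search_flights(destination):
    """Hypothetical tool: pretend to query a flight API."""
    return [
        {"destination": destination, "price": 420},
        {"destination": destination, "price": 380},
    ]

# The agent's "hands": a lookup table from tool names to callable functions.
TOOLS = {"check_calendar": check_calendar, "search_flights": search_flights}

def stub_brain(goal):
    """Stand-in for the LLM: turn a goal into a list of (tool, argument) calls."""
    if "trip" in goal.lower():
        return [("check_calendar", "2026-03-02"), ("search_flights", "Berlin")]
    return []

def run(goal):
    """Execute every tool call the brain asked for and collect the results."""
    results = {}
    for tool_name, argument in stub_brain(goal):
        results[tool_name] = TOOLS[tool_name](argument)
    return results

results = run("Plan my business trip")
cheapest = min(results["search_flights"], key=lambda flight: flight["price"])
print(results["check_calendar"], cheapest["price"])
```

In a real system the model itself decides which tools to call and with what arguments; the registry pattern stays much the same.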

Why Modern AI Uses RAG (The “Secret Sauce”)

But here’s the “dirty secret” about AI that most beginners don’t realize: AI doesn’t actually “know” things the way we do.

Left to its own devices, AI sometimes guesses based on patterns. In the tech world, we call this a “hallucination”—and it can lead to some pretty outdated or flat-out wrong answers. That’s why we use a powerful technique called Retrieval-Augmented Generation (RAG). Think of it as giving the AI an open-book exam where it has to look up the facts from a trusted source before it answers you.
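Here is a minimal Python sketch of that open-book idea. It makes two big simplifications: retrieval is plain word overlap (real RAG systems typically use vector search over embeddings), and the final step only builds the prompt instead of calling a model. The documents and function names are made up for the example.

```python
def tokenize(text):
    """Lowercase the text, drop basic punctuation, and split into a set of words."""
    return set(text.lower().replace("?", "").replace(".", "").split())

def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(question, documents):
    """Put the retrieved sources in front of the question before asking the model."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents))
    return (
        "Answer using only the sources below. If they do not contain the answer, say so.\n"
        f"Sources:\n{context}\n"
        f"Question: {question}"
    )

# Made-up knowledge base, purely for illustration.
documents = [
    "The Pro plan costs 29 dollars per month.",
    "Support is available on weekdays.",
    "Refunds are processed within 14 days.",
]
question = "How much does the Pro plan cost?"
print(build_prompt(question, documents))
```

The model still writes the answer, but it now has the relevant fact sitting in front of it, which is why RAG tends to reduce (not eliminate) hallucinations.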

Multi-modal Systems: AI with Senses

Modern AI doesn’t just read text. It processes Images, Audio, and Video in the same “space.”

Example: You can upload a photo of a broken motherboard and ask, “Why isn’t this booting?” The AI doesn’t just “read” your question; it “sees” the blown capacitor in the image and gives you a step-by-step repair guide.
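What does processing everything “in the same space” mean? One common approach is to turn each input, whatever its type, into a list of numbers (an embedding) so that similar meanings land close together. The Python sketch below uses hand-made three-number vectors and invented file names; real systems learn vectors with hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Return how closely two vectors point in the same direction (1.0 = identical)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hand-made, hypothetical embeddings keyed by (modality, name).
EMBEDDINGS = {
    ("text", "a photo of a cat"): [0.9, 0.1, 0.0],
    ("image", "cat.jpg"): [0.8, 0.2, 0.1],
    ("audio", "engine_noise.wav"): [0.0, 0.2, 0.9],
}

def closest(query_key):
    """Find the other item whose embedding is most similar to the query's."""
    query = EMBEDDINGS[query_key]
    others = [key for key in EMBEDDINGS if key != query_key]
    return max(others, key=lambda key: cosine_similarity(query, EMBEDDINGS[key]))

print(closest(("text", "a photo of a cat")))
```

Because the text and the image sit close together in the same space, the text query finds the image even though they are different kinds of data.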

Why Understanding This Matters for Your Business

Whether you’re a freelancer, a small business owner, or just a tech enthusiast, understanding “How AI Works” is your competitive edge. In 2026, the world is splitting into two groups: those who use AI as a search engine, and those who use it as an operating system.

By understanding the shift to Agentic AI, you can start building workflows that actually do the work for you, rather than just writing about it.

Final Thoughts from techecom.com

We are in the middle of the biggest technological shift since the invention of the internet. Don’t be intimidated by the jargon. At its core, AI is just a tool designed to help us be more human by taking the “robotic” tasks off our plates.

Ready to Level Up Your AI Game?

If you found this guide helpful, don’t stop here!

- Check out our 2026 AI Tool Directory to find the best Agentic tools for your niche.

- Subscribe to the techecom.com newsletter for weekly breakdowns of how we’re using these tools to scale our business.

FAQ: Everything You’re Still Wondering About

Q: Does AI actually think like a human?

A: Not quite. While it mimics human reasoning through “layers” of digital neurons, it doesn’t have feelings or a consciousness. It’s highly advanced pattern matching and probability, but in 2026, those patterns are complex enough that, for many tasks, the output is hard to tell apart from “thought.”

Q: What’s the difference between traditional AI and generative AI?

A: Traditional AI is like a filter (it categorizes things, like a spam filter). Generative AI is like a creator (it builds new content based on what it learned).

Q: Can I trust what AI tells me?

A: Most of the time, yes, but always look for “Human-in-the-Loop” verification. At techecom.com, we never publish AI-generated data without a human expert (like me!) fact-checking the logic.

Also Read: Ethical AI Usage & How to Use AI Without Losing Your Skills